Why SOC analysts get inconsistent results from ChatGPT (and how structured workflows fix it)


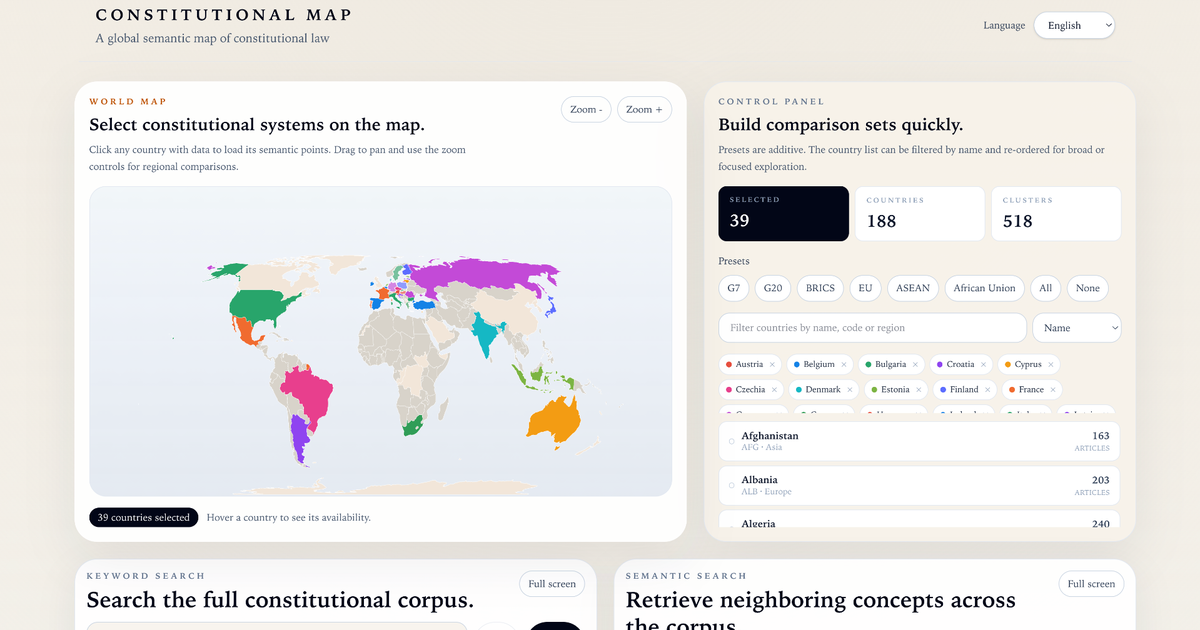

Source: DEV Community

If you've ever handed a security alert to ChatGPT and gotten a different answer each time, you've hit the real problem. It's not the model. It's the prompt.

Most analysts paste an alert and ask "what do you think?" That's like asking a junior analyst to investigate without a runbook. You'll get something back, but the quality depends entirely on how the question was framed.

The real problem: no structure

Experienced SOC analysts don't wing investigations. They follow a process:

1. Triage the alert
2. Map to MITRE ATT&CK
3. Check for lateral movement
4. Build a containment recommendation
5. Write a ticket summary

The issue is that most AI-assisted workflows skip steps 2–5 and jump straight to "is this bad?"

What I built

I spent time building SOC.Workflows, a free collection of structured investigation workflows for SOC analysts. Each workflow breaks an investigation into 4 steps, with specific prompts for each step, designed to run in ChatGPT or Claude.

Current workflows: Phishing Email Investig
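
To make the "one specific prompt per step" idea concrete, here is a minimal sketch in Python. It is not taken from SOC.Workflows: the `Step` class, the `build_prompts` function, and the instruction wording are all hypothetical, written only to show how the five-step analyst process described earlier could be turned into a fixed sequence of prompts instead of a single "what do you think?"

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class Step:
    """One stage of an investigation (hypothetical structure, for illustration)."""
    name: str
    instruction: str


# Step names come from the process listed in the article.
# The instruction text is an assumption, not the wording SOC.Workflows uses.
STEPS = [
    Step("Triage the alert",
         "State the likely severity and whether this looks like a true positive, with reasons."),
    Step("Map to MITRE ATT&CK",
         "List the tactics and techniques the observed activity matches."),
    Step("Check for lateral movement",
         "Name the evidence that would confirm or rule out movement to other hosts."),
    Step("Build a containment recommendation",
         "Recommend containment actions in priority order."),
    Step("Write a ticket summary",
         "Summarize findings and actions in a short ticket note."),
]


def build_prompts(alert: str, steps: list[Step] = STEPS) -> list[str]:
    """Return one prompt per step, each carrying the same alert text."""
    total = len(steps)
    prompts = []
    for number, step in enumerate(steps, start=1):
        prompts.append(
            f"Step {number} of {total}: {step.name}\n"
            f"{step.instruction}\n"
            "Answer only this step. Write 'unknown' instead of guessing.\n\n"
            f"Alert:\n{alert}"
        )
    return prompts


if __name__ == "__main__":
    # Placeholder alert text, invented for the example.
    for prompt in build_prompts("Multiple failed logins followed by a success on host A"):
        print(prompt)
        print("-" * 40)
```

Each prompt would be pasted into ChatGPT or Claude in order, so every alert is asked the same questions in the same sequence. That fixed framing is the point of the sketch; the exact wording of each instruction is a placeholder to adapt.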