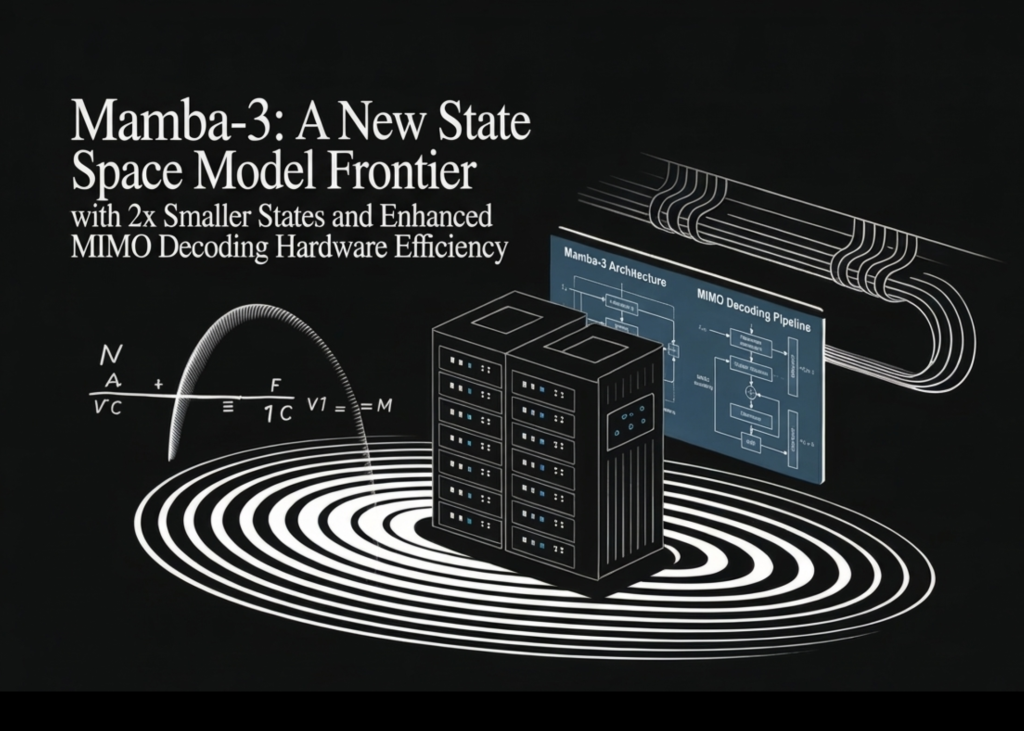

Meet Mamba-3: A New State Space Model Frontier with 2x Smaller States and Enhanced MIMO Decoding Hardware Efficiency

Source: MarkTechPost

The scaling of inference-time compute has become a primary driver for Large Language Model (LLM) performance, shifting architectural focus toward inference efficiency alongside model quality. While Transformer-based architectures remain the standard, their quadratic computational complexity and linear memory requirements create significant deployment bottlenecks. A team of researchers from Carnegie Mellon University (CMU), Princeton University, Together […]