How I Built a Monitoring Stack That Actually Catches AI Agent Failures Before They Cost Money

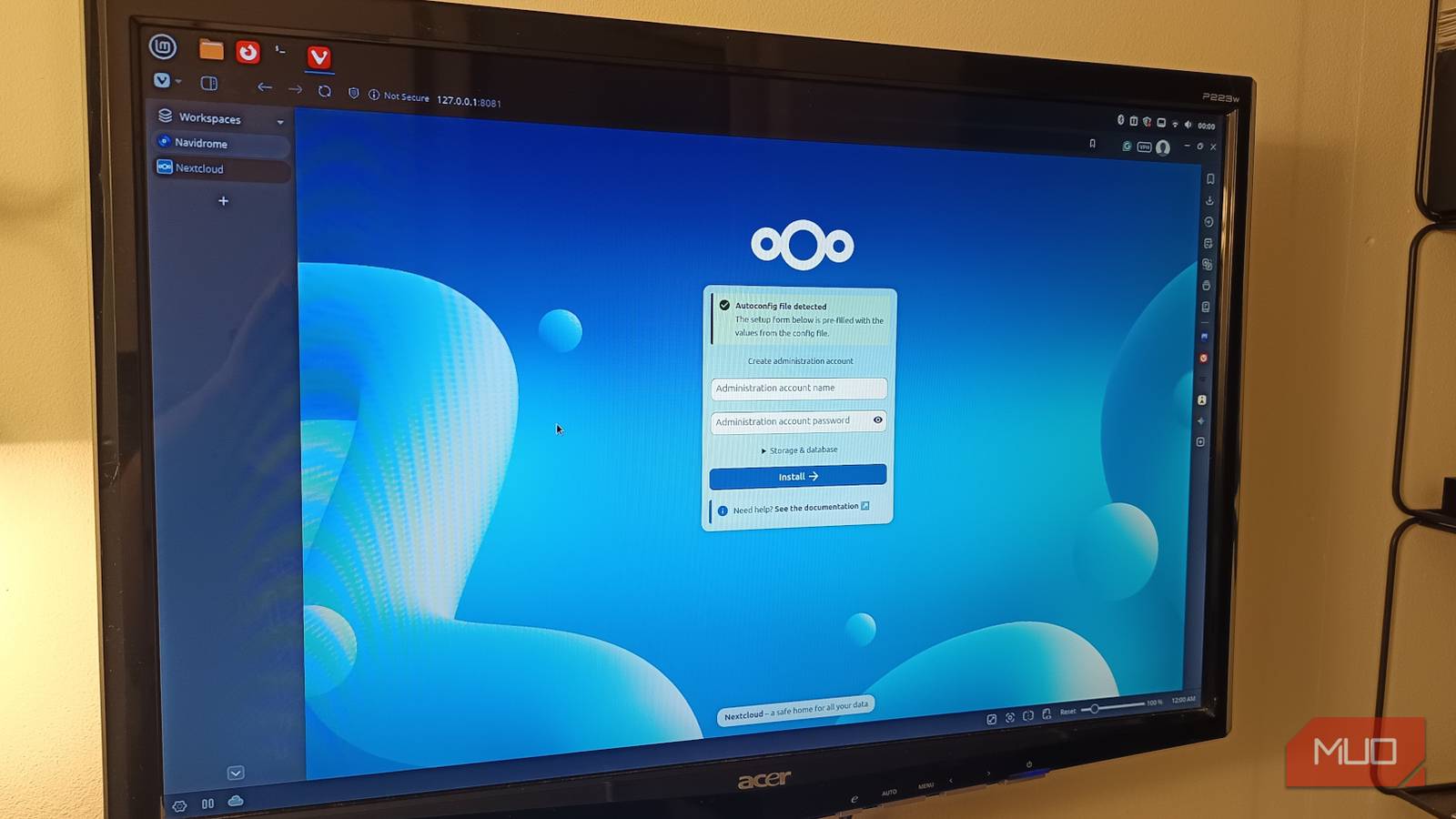

Source: DEV Community

Most monitoring tools for AI agents are useless. They tell you that something failed, not why, and by the time you find out, you've already burned through your API budget.

After watching my agents cost me thousands in failed retries and hallucinations, I built a monitoring stack that catches failures in real time. Here's the technical architecture.

The Three Failure Modes Most Tools Miss

Your agent isn't failing in one way. It's failing in three:

- Silent Drift: The agent keeps producing output that looks correct but drifts from the actual goal over time
- Confidence Inflation: The agent reports high confidence while producing garbage
- Cost Escalation: Retry spirals that explode your budget without any visible error

Standard observability tools don't catch any of these. Here's what does.

The Monitoring Architecture

┌─────────────────────────────────────────────────────────┐
│ Agent Execution │